Training NER Models in IRI DarkShield

Natural Language Processing (NLP) task wizards in the IRI Workbench GUI for DarkShield are designed to help you improve the accuracy of finding PII in unstructured sources using content-aware search matchers, called Data Matchers, during DarkShield data discovery operations. The results from NLP and other supported search matchers are serialized in PII location annotation and log files which are used for data classification, masking, and reporting.

To make these NLP jobs more efficient, DarkShield can use Apache OpenNLP, PyTorch, or TensorFlow models for Named Entity Recognition (NER), and can help you train those models using machine learning. NER models make it easier to find proper nouns (like people’s names) within text files and documents because they recognize terms from the grammar and context of English (or other language) sentences.

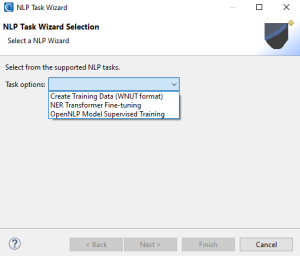

This article documents the currently supported NLP task wizards in DarkShield, which include:

- Transformer Training Data (WNUT format) Creation

- NER Transformer Fine-tuning

- OpenNLP Model Supervised Training

From the DarkShield menu in the top toolbar of IRI Workbench, you can select any of these tasks from a drop-down list.

After making your choice in the drop-down box on this top-level page, click Next.

Preprocessing Training Data (WNUT format)

This wizard assists in creating training data that can be used to fine-tune Named Entity Recognition models. The NER models used here are transformer-based, so they are referred to as transformers.

Fine-tuning a transformer requires a labeled dataset, referred to as training data. The Create Transformer Training Data task helps you produce this training data in the format that fine-tuning requires.

Training data is produced through a multi-step process:

- Raw text from a text file is split into sentences using various sentence segmentation techniques.

- Each sentence is then processed and tokenized so that each word in the sentence is assigned a label (e.g., Person, Location, Organization).

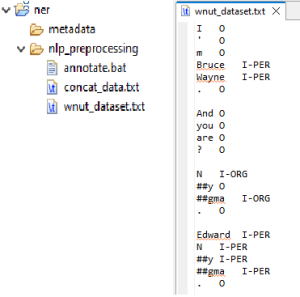

- Annotated sentences are converted into Workshop on Noisy User-generated Text (WNUT) format.

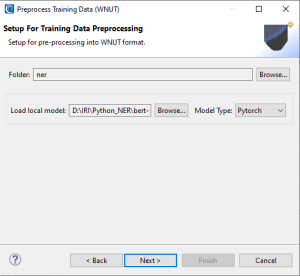
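The last two steps above can be sketched in Python. This is a minimal illustration, not DarkShield's code: it assumes the model has already assigned one entity label (or none) to each word, converts those labels to BIO tags (B- begins an entity, I- continues it, O is outside any entity), and writes one token and tag per line with a blank line between sentences, the layout used by the WNUT 2017 shared-task data. The helper names `to_bio` and `to_wnut` and the lowercase tag names are hypothetical.

```python
from typing import List, Optional, Tuple


def to_bio(labels: List[Optional[str]]) -> List[str]:
    """Turn per-word entity labels (None = no entity) into BIO tags.

    Consecutive words with the same label are treated as one entity, so this
    simple sketch merges two adjacent entities of the same type.
    """
    tags = []
    previous = None
    for label in labels:
        if label is None:
            tags.append("O")  # outside any entity
        elif label == previous:
            tags.append(f"I-{label}")  # continuation of the current entity
        else:
            tags.append(f"B-{label}")  # beginning of a new entity
        previous = label
    return tags


def to_wnut(sentences: List[List[Tuple[str, str]]]) -> str:
    """Serialize (token, tag) sentences as 'token<TAB>tag' lines,
    with a blank line separating sentences."""
    blocks = ["\n".join(f"{token}\t{tag}" for token, tag in sentence)
              for sentence in sentences]
    return "\n\n".join(blocks) + "\n"


# Hypothetical model output for one sentence.
words = ["Dr.", "Wood", "visited", "Boston"]
labels = [None, "person", None, "location"]
print(to_wnut([list(zip(words, to_bio(labels)))]))
```

Running it prints one `token<TAB>tag` line per word, with `Wood` tagged `B-person` and `Boston` tagged `B-location`.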

The first page is the setup page for the NLP task. Specify the folder in which the batch script and resulting training data will be placed. Next, choose an NER model to use in the preprocessing task.

The loaded pretrained model is used to annotate the raw text. Therefore, the model used to annotate the training data and the model that will be fine-tuned should either be the same model or share the same label identifiers.
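If both models are Hugging Face-style transformers, their label identifiers are typically stored in the `id2label` mapping of each model's config.json. The sketch below assumes that layout, reads both mappings, and lists any ids whose labels differ. The function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Dict, List, Optional, Tuple


def load_id2label(config_path: Path) -> Dict[int, str]:
    """Read the id -> label mapping from a Hugging Face-style config.json."""
    config = json.loads(Path(config_path).read_text(encoding="utf-8"))
    # JSON object keys are always strings, so convert the ids back to integers.
    return {int(k): v for k, v in config.get("id2label", {}).items()}


def label_mismatches(
    annotator: Dict[int, str], target: Dict[int, str]
) -> List[Tuple[int, Optional[str], Optional[str]]]:
    """List every id whose label differs (or is missing) between the two models."""
    ids = sorted(set(annotator) | set(target))
    return [(i, annotator.get(i), target.get(i))
            for i in ids if annotator.get(i) != target.get(i)]
```

For example, `label_mismatches(load_id2label(Path("annotator/config.json")), load_id2label(Path("target/config.json")))` returns an empty list when the label identifiers agree; the folder names are placeholders.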

After choosing a model, indicate the model type. The currently supported frameworks are PyTorch and TensorFlow. Then click Next.

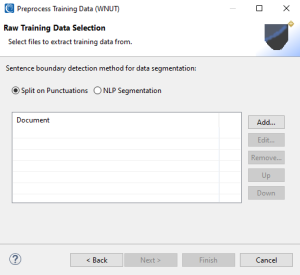

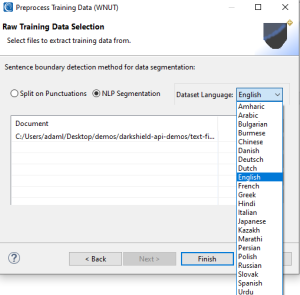

On the next page, provide documents containing raw, free-floating text. These documents are compiled into one large dataset and then split into sentences.

Splitting text into sentences requires an NLP task called sentence boundary detection. Two methods are available: splitting on punctuation, or using natural language processing to detect sentence boundaries.

Split on Punctuations

As the name implies, this option splits free-floating text into separate sentences by using punctuation marks (. ? !) as delimiters. It is fast and simple to apply, but it can produce false sentence boundaries.

For example, the sentence “Dr. Wood met Mr. Smith at the clinic today.” would be split into three parts: “Dr”, “Wood met Mr”, and “Smith at the clinic today”.

Relying on punctuation as sentence delimiters is also unreliable across languages, because different written languages use punctuation differently.
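A naive punctuation splitter in Python reproduces the problem described in this section. This is a simplified illustration of the technique, not the exact implementation DarkShield uses; `split_on_punctuation` is a hypothetical name.

```python
import re
from typing import List


def split_on_punctuation(text: str) -> List[str]:
    """Naively treat every '.', '?' or '!' as the end of a sentence."""
    parts = re.split(r"[.?!]", text)
    # Strip surrounding whitespace and drop the empty piece after the final period.
    return [part.strip() for part in parts if part.strip()]


print(split_on_punctuation("Dr. Wood met Mr. Smith at the clinic today."))
# ['Dr', 'Wood met Mr', 'Smith at the clinic today']
```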

NLP Segmentation

This option uses pySBD (Python Sentence Boundary Disambiguation), an open-source library, to apply rule-based segmentation to text. Its rules handle cases that trip up punctuation splitting, such as abbreviations like “Dr.” and “Mr.”, and it supports 22 languages.
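In pySBD itself, segmentation is a single call such as `pysbd.Segmenter(language="en", clean=False).segment(text)`. To show why rules help, the sketch below is a deliberately tiny rule-based splitter, not pySBD's algorithm: it breaks only at terminal punctuation followed by whitespace, and skips breaks after a small, hypothetical list of abbreviations.

```python
import re
from typing import List

# Hypothetical, deliberately tiny abbreviation list for illustration only.
ABBREVIATIONS = {"dr.", "mr.", "mrs.", "ms.", "prof.", "e.g.", "i.e."}


def rule_based_split(text: str) -> List[str]:
    """Split at terminal punctuation followed by whitespace,
    except when the preceding word is a known abbreviation."""
    sentences: List[str] = []
    start = 0
    for match in re.finditer(r"[.?!]\s+", text):
        candidate = text[start:match.start() + 1]  # keep the punctuation mark
        words = candidate.split()
        if words and words[-1].lower() in ABBREVIATIONS:
            continue  # "Dr." or "Mr." does not end a sentence
        sentences.append(candidate.strip())
        start = match.end()
    tail = text[start:].strip()
    if tail:
        sentences.append(tail)
    return sentences
```

Given “Dr. Wood met Mr. Smith at the clinic today. She left at noon.”, it returns `["Dr. Wood met Mr. Smith at the clinic today.", "She left at noon."]`. pySBD applies many more rules of this kind, per language.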

After selecting the desired sentence boundary detection method, click Add… to browse for and add plain text documents. Use the Edit… button to adjust your selection and Remove… to remove documents.

Once done, click Finish to generate a batch script that produces the training data.

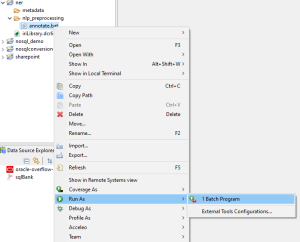

Right-click on the produced batch script and select Run As > Batch Program. The script will run and produce a dataset for training purposes in WNUT format.

Note that creating the training dataset can take anywhere from several minutes to several hours, depending on the amount of text provided.

Transformer Model Trainer

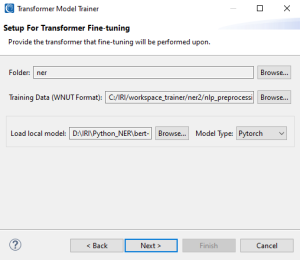

This wizard assists in the fine-tuning of PyTorch and TensorFlow NER models to improve the accuracy of PII search results. As mentioned, PyTorch and TensorFlow are machine learning frameworks.

For better accuracy and performance, these pretrained models should be trained further on datasets specific to the NER tasks you need to run. In other words, pretrained models should be fine-tuned with data similar to what DarkShield will search in normal operations.

Hugging Face is an American company noted for its transformers library built for natural language processing (NLP) applications. Its platform allows users to share machine learning models and datasets.

Hugging Face transformers give DarkShield the ability to use AI-powered search matchers to discover and categorize sensitive information like names, places, and organizations. The Hugging Face Model Hub provides access to thousands of pretrained models for a variety of use cases and languages.

The steps on the first page of the Transformer Model Trainer wizard are the same as those on the first page of the Preprocessing Training Data (WNUT format) wizard.

Once finished, click Next to move to the next page of the wizard, where fine-tuning of the dataset begins.

Fine-tuning the training data on this page is a multi-step process:

- Review the words that have been organized and assigned labels in each folder tab at the bottom. Each folder tab represents a type of entity and provides both a scroll bar and a search box. Typing characters in the search box filters the list to words that start with those characters; to match words that end with the characters instead, precede them with .* (for example, .*rot matches carrot, parrot, and rot).

- If a word needs to be edited, select it and click Edit to open a dialog where you can modify its original text value.

- To undo a misassignment of a word to a label, select the word and click Unlabel. This removes the word's label and places it in the pool of words under Unlabeled words.

- To reassign a word from the Unlabeled words table, select the folder tab for the desired label, select the word in the Unlabeled words table, and click Label.

- Once reassignments are finished, click Next 1000 Sentences… to process the next chunk of sentences, or click Complete.

- Repeat the steps above as many times as necessary until you decide to click Complete.

- Once fine-tuning of data is complete, click the Finish button at the bottom.

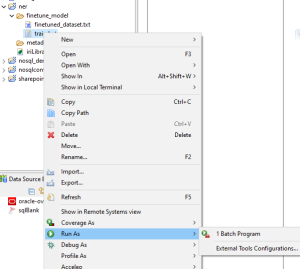
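The review workflow on this page can be modeled as a small data structure: words grouped by label, an unlabeled pool, and the Edit, Unlabel, and Label actions moving words between them. This Python sketch is hypothetical and only illustrates the bookkeeping. It assumes case-sensitive matching and treats a leading `.*` as a switch to suffix matching rather than as a full regular expression, which may differ from the Workbench's actual search behavior. `sentence_batches` mirrors the Next 1000 Sentences… button.

```python
from typing import Dict, Iterator, List, Set


class LabelReview:
    """Toy model of the review page: words grouped by label plus an unlabeled pool."""

    def __init__(self, labeled: Dict[str, Set[str]]):
        self.labeled = {label: set(words) for label, words in labeled.items()}
        self.unlabeled: Set[str] = set()

    def search(self, label: str, query: str) -> List[str]:
        """Prefix match by default; a leading '.*' switches to suffix match."""
        words = self.labeled[label]
        if query.startswith(".*"):
            suffix = query[2:]
            return sorted(w for w in words if w.endswith(suffix))
        return sorted(w for w in words if w.startswith(query))

    def edit(self, label: str, old: str, new: str) -> None:
        """Replace a word's text value within its label."""
        self.labeled[label].remove(old)
        self.labeled[label].add(new)

    def unlabel(self, label: str, word: str) -> None:
        """Remove a word from its label and move it to the unlabeled pool."""
        self.labeled[label].remove(word)
        self.unlabeled.add(word)

    def label(self, label: str, word: str) -> None:
        """Assign a word from the unlabeled pool to the selected label."""
        self.unlabeled.remove(word)
        self.labeled.setdefault(label, set()).add(word)


def sentence_batches(sentences: List[str], size: int = 1000) -> Iterator[List[str]]:
    """Yield the sentences in chunks, one chunk per review pass."""
    for i in range(0, len(sentences), size):
        yield sentences[i:i + size]
```

For example, searching a tab containing carrot, parrot, rot, and rotate for `.*rot` returns `['carrot', 'parrot', 'rot']`, while `rot` returns `['rot', 'rotate']`.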

After you complete the wizard page, a file called finetuned_dataset.txt is produced along with a batch script containing instructions to train a model on the newly produced dataset. Right-click the produced batch script and select Run As > Batch Program.

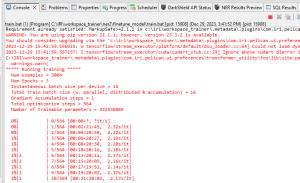
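Because training time depends on how much data finetuned_dataset.txt contains, it can help to measure the file before launching the script. The sketch below assumes the file uses the same WNUT-style layout as the training data (one token and tag per line, blank lines between sentences); that layout and the `dataset_stats` helper are assumptions, not documented DarkShield behavior.

```python
from pathlib import Path
from typing import Dict


def dataset_stats(path: Path) -> Dict[str, int]:
    """Count sentences, tokens, and entity mentions in a WNUT-style file."""
    sentences = tokens = entities = 0
    in_sentence = False
    for line in Path(path).read_text(encoding="utf-8").splitlines():
        if not line.strip():
            in_sentence = False  # a blank line ends the current sentence
            continue
        if not in_sentence:
            sentences += 1
            in_sentence = True
        tokens += 1
        tag = line.split()[-1]  # the tag is the last whitespace-separated column
        if tag.startswith("B-"):
            entities += 1  # each entity mention starts with exactly one B- tag
    return {"sentences": sentences, "tokens": tokens, "entities": entities}
```

For example, `dataset_stats(Path("finetuned_dataset.txt"))` returns a dictionary with `sentences`, `tokens`, and `entities` counts.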

The batch script starts training the model on the fine-tuned dataset. This process can take from several minutes to several hours, depending on the size of the finetuned_dataset.txt file. You can track the progress of the training in the console log.

When training is complete, the console log reports that training has finished successfully, and a new model trained on the data is produced.

Console log reports training has successfully finished

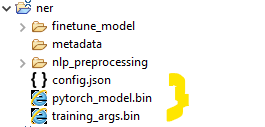

Once the new model is created, place the produced model.bin and config.json files together inside a folder. The newly trained model can then be assigned to data classes and used by DarkShield for NER matching.

After training is completed, these files are produced.

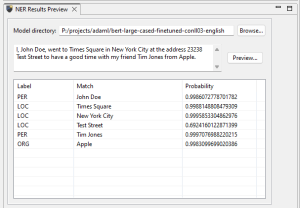
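Collecting the two model files into one folder can be scripted. The following sketch copies model.bin and config.json from the training output folder into a dedicated model folder and fails early if either file is missing; the folder paths and the `package_model` name are hypothetical.

```python
import shutil
from pathlib import Path

# The two files the trained model needs, per the steps above.
REQUIRED_FILES = ("model.bin", "config.json")


def package_model(output_dir: Path, target_dir: Path) -> Path:
    """Copy the trained model files into one folder and return that folder."""
    output_dir, target_dir = Path(output_dir), Path(target_dir)
    missing = [name for name in REQUIRED_FILES if not (output_dir / name).is_file()]
    if missing:
        # Check before creating anything so a failed run leaves no empty folder.
        raise FileNotFoundError(f"Missing in {output_dir}: {', '.join(missing)}")
    target_dir.mkdir(parents=True, exist_ok=True)
    for name in REQUIRED_FILES:
        shutil.copy2(output_dir / name, target_dir / name)  # copy, keeping timestamps
    return target_dir
```

Copying rather than moving keeps the original training output intact in case the model needs to be packaged again.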

NER Results Preview

IRI Workbench includes a view called NER Results Preview. This panel allows you to test the accuracy and performance of NER models on free-floating text. The model categorizes the text based on the context of each sentence, and words receive labels and probability scores.

NER Results Preview Workbench View
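When reviewing label and probability-score output like this, it can help to focus on high-confidence predictions. The sketch below assumes each prediction is a dictionary with `entity`, `score`, and `word` keys, the shape of per-token results from the Hugging Face transformers token-classification pipeline; the NER Results Preview view does not necessarily use this structure. The 0.9 threshold and the sample predictions are made up for illustration.

```python
from typing import Dict, List


def confident_entities(results: List[Dict], min_score: float = 0.9) -> List[Dict]:
    """Keep predictions whose probability score meets the threshold, highest first."""
    kept = [r for r in results if r["score"] >= min_score]
    return sorted(kept, key=lambda r: r["score"], reverse=True)


# Made-up predictions, for illustration only.
sample = [
    {"entity": "B-PER", "score": 0.987, "word": "Smith"},
    {"entity": "B-LOC", "score": 0.41, "word": "clinic"},
    {"entity": "B-PER", "score": 0.998, "word": "Wood"},
]
for r in confident_entities(sample):
    print(f"{r['word']:<10}{r['entity']:<8}{r['score']:.3f}")
```

Raising or lowering `min_score` trades missed entities against false matches, which is the same judgment the preview helps you make before assigning a model to data classes.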

If you have any questions or need help using these wizards to improve your search results, please email darkshield@iri.com.