Big Data

What’s New in CoSort 10.5?

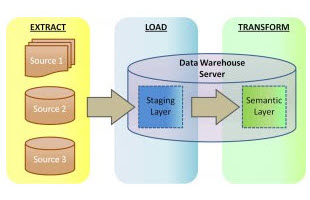

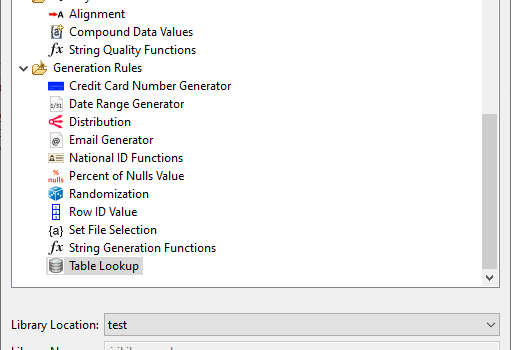

Abstract: Three years after the release of CoSort V10, IRI has announced a significant interim update to its primary data transformation software package. This article summarizes what's new in the CoSort high-performance data sorting and ETL tool since Version 10.0.1 was introduced.