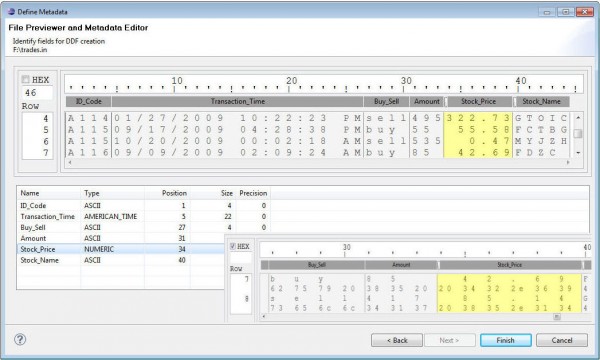

Data Profiling: Discovering Data Details

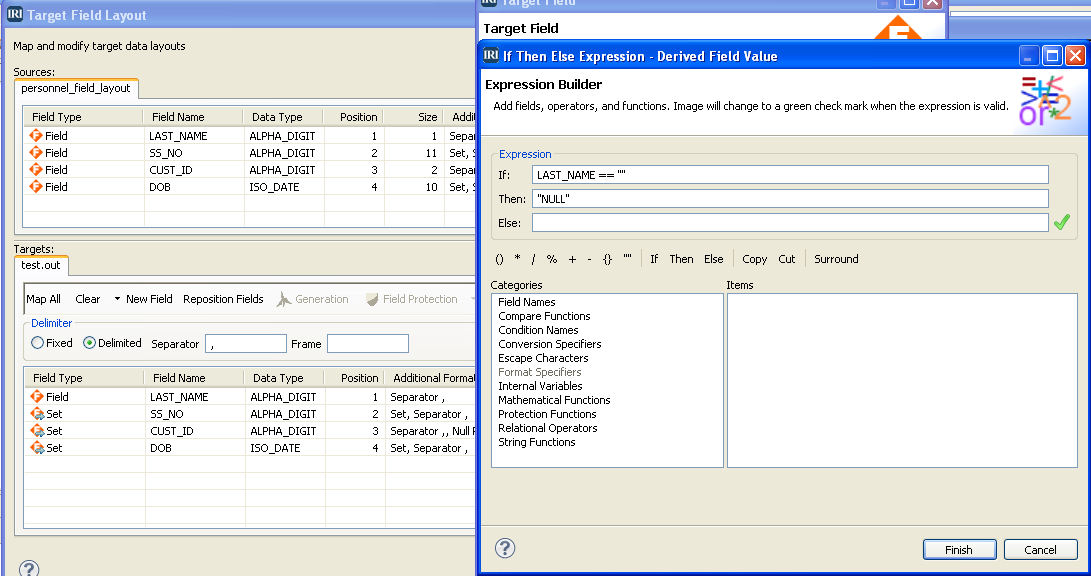

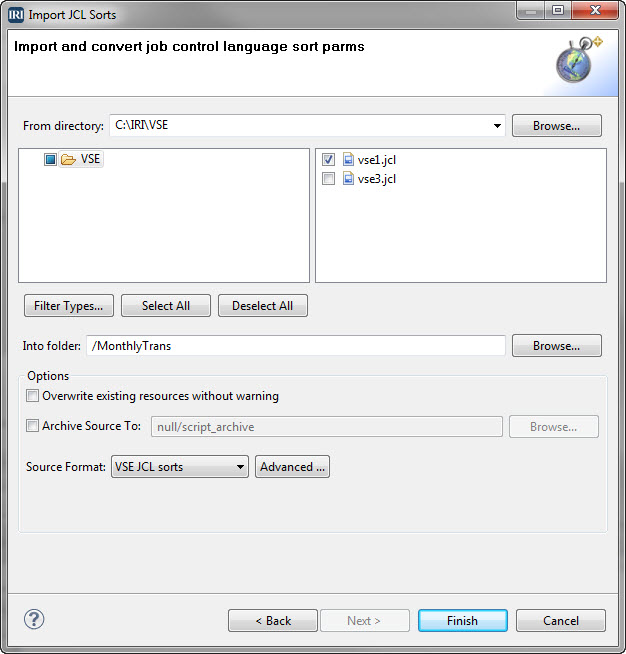

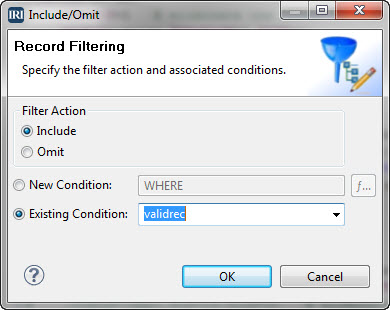

Data profiling, also called data discovery, is the process of examining data sources to collect information and descriptive statistics about them. Its purpose is to understand the data better: its content, structure, and relationships, as well as its current levels of accuracy and integrity.
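To illustrate the descriptive statistics a profiler gathers, here is a minimal sketch in plain Python. It assumes the data arrives as a list of dictionaries, one per row. The function names `profile`, `profile_column`, and `infer_type` and the sample records are hypothetical. Real profiling tools run many more checks, such as value patterns, distributions, and cross-column dependencies.

```python
from collections import Counter
from statistics import mean


def infer_type(values):
    """Return a coarse type label for the non-null values of a column."""
    types = {type(v).__name__ for v in values}
    if not types:
        return "empty"
    if types <= {"int", "float"}:
        return "numeric"
    if len(types) == 1:
        return types.pop()
    return "mixed"  # several types in one column often signals dirty data


def profile_column(values):
    """Collect simple descriptive statistics for one column."""
    non_null = [v for v in values if v is not None]
    stats = {
        "count": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
        "type": infer_type(non_null),
    }
    if stats["type"] == "numeric":
        stats["min"] = min(non_null)
        stats["max"] = max(non_null)
        stats["mean"] = mean(non_null)
    elif non_null:
        # The most frequent value helps spot defaults, placeholders, or duplicates.
        stats["top"] = Counter(non_null).most_common(1)[0]
    return stats


def profile(rows):
    """Profile every column found in a list of dict records, in first-seen order."""
    columns = []
    for row in rows:
        for key in row:
            if key not in columns:
                columns.append(key)
    return {col: profile_column([row.get(col) for row in rows]) for col in columns}


# Hypothetical sample records used only for illustration.
sample_rows = [
    {"id": 1, "email": "a@example.com", "age": 30},
    {"id": 2, "email": None, "age": 40},
    {"id": 3, "email": "c@example.com", "age": None},
    {"id": 4, "email": "c@example.com", "age": 50},
]

report = profile(sample_rows)
for column, stats in report.items():
    print(column, stats)
```

In this sample, the `id` column's `distinct` count equals its non-null count, which marks it as a candidate key. That is one simple way a profile can reveal relationships and integrity constraints. The null in `email` and the repeated `c@example.com` are the kind of accuracy questions a profile raises for follow-up.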