Using Selection to Reduce Data Bulk (and Improve Data…

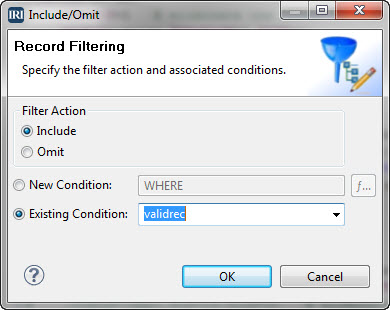

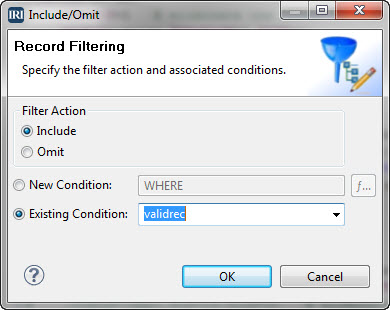

One of the best ways to speed up big data processing operations is to not process so much data in the first place; i.e. to eliminate unnecessary data ahead of time.
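Here is a minimal sketch of the principle in plain Python. It is not CoSort or SortCL syntax. The function drops unwanted rows before sorting, so the sort only touches the records that survive selection. The CSV layout and the `region == "EU"` condition are hypothetical.

```python
import csv
import io

# Hypothetical input: a small CSV extract standing in for a much larger file.
RAW = """id,region,amount
1,EU,250
2,US,75
3,EU,40
4,APAC,310
5,EU,125
"""

def select_then_sort(text, keep):
    """Filter rows with `keep` first, then sort only the survivors."""
    rows = csv.DictReader(io.StringIO(text))
    selected = [r for r in rows if keep(r)]  # selection step shrinks the data
    # The sort now works on 3 rows instead of 5: amount, descending.
    return sorted(selected, key=lambda r: int(r["amount"]), reverse=True)

result = select_then_sort(RAW, lambda r: r["region"] == "EU")
print([r["id"] for r in result])  # ['1', '5', '3']
```

With real volumes the savings come from the same ordering. Every row removed by the filter is a row the sort, and anything downstream of it, never has to read, compare, or write.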


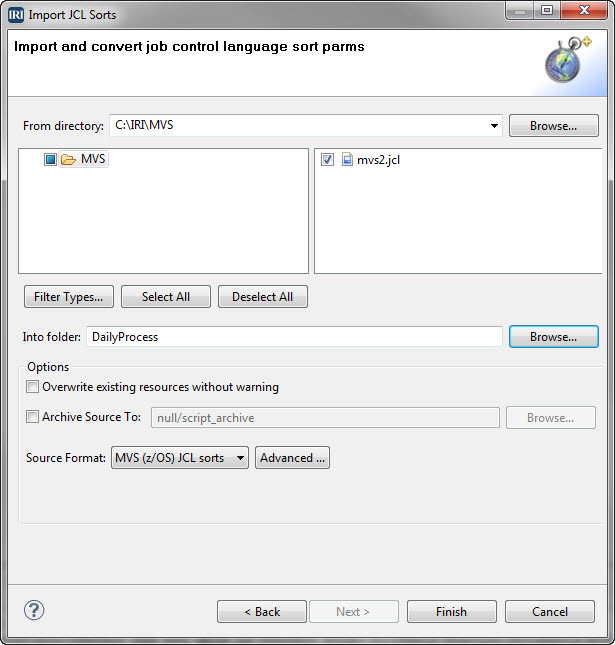

MVS, short for Multiple Virtual Storage, is the IBM mainframe operating system that evolved into today's z/OS. Its scripting language, job control language (JCL), instructs the system how to run batch jobs or start subsystems.

In 1992, Digital Equipment Corporation (DEC, long since acquired) asked IRI to develop a 4GL interface to CoSort in the syntax of the VAX VMS sort/merge utility.

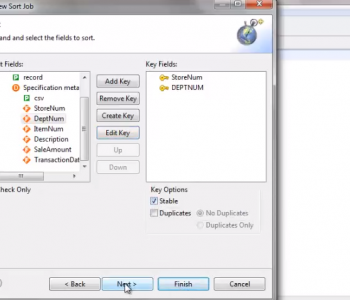

This demonstration shows how to set up a sort job for CoSort using the IRI Workbench. The sort is accomplished using the SortCL language. This video takes a CSV input file, shows how to define the sort keys and options, and demonstrates how to define the targets for output.
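The demo itself builds the job in SortCL. The snippet below is not SortCL. It is a rough Python analogue of the same three pieces: a CSV input, a list of sort keys each with its own direction, and an output target (here a CSV string). The `KEYS` spec format and the sample data are hypothetical.

```python
import csv
import io

RAW = """name,dept,salary
Ann,Sales,52000
Bob,IT,61000
Cid,Sales,58000
Dee,IT,61000
Eve,HR,47000
"""

# Hypothetical sort-key spec: (field, type converter, descending?)
# Primary key: dept ascending; secondary key: salary descending.
KEYS = [("dept", str, False), ("salary", int, True)]

def multi_key_sort(rows, keys):
    """Sort by several keys, applying them from least to most significant.

    Python's sort is stable (also with reverse=True), so each pass keeps
    the order established by the previous, less significant key.
    """
    out = list(rows)
    for field, conv, desc in reversed(keys):
        out.sort(key=lambda r: conv(r[field]), reverse=desc)
    return out

def write_csv(rows, fieldnames):
    """Output target: render the sorted rows back to CSV text."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()

rows = list(csv.DictReader(io.StringIO(RAW)))
ordered = multi_key_sort(rows, KEYS)
print(write_csv(ordered, ["name", "dept", "salary"]))
```

Ties on both keys, like Bob and Dee in IT at 61000, keep their input order because every pass is stable.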

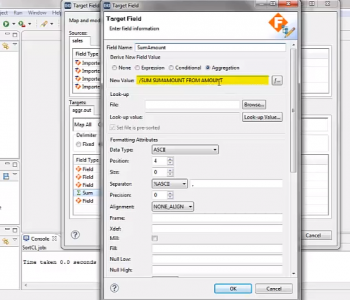

This demonstration shows how to use the IRI Workbench to create an aggregation job using sums. Workbench is used to create the job script in the SortCL language.
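Conceptually, an aggregation job with sums groups records on a key and totals one or more numeric fields per group. The Python sketch below illustrates that idea only. It is not the SortCL script the demo generates, and the field names (`store`, `qty`, `revenue`) are hypothetical. It uses `Decimal` so that currency totals are exact.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal

RAW = """store,product,qty,revenue
A,widget,3,30.00
B,widget,1,10.00
A,gadget,2,50.00
B,gadget,4,100.00
A,widget,5,50.00
"""

def sum_by(rows, group_field, sum_fields):
    """Group rows on `group_field` and sum each field in `sum_fields`."""
    totals = defaultdict(lambda: {f: Decimal(0) for f in sum_fields})
    for row in rows:
        acc = totals[row[group_field]]  # creates a zeroed bucket on first sight
        for f in sum_fields:
            acc[f] += Decimal(row[f])
    # Return groups in key order, like a sorted aggregate report.
    return dict(sorted(totals.items()))

totals = sum_by(csv.DictReader(io.StringIO(RAW)), "store", ["qty", "revenue"])
for store, sums in totals.items():
    print(store, sums["qty"], sums["revenue"])
```

Store A totals 10 units and 130.00 in revenue, and store B totals 5 units and 110.00.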

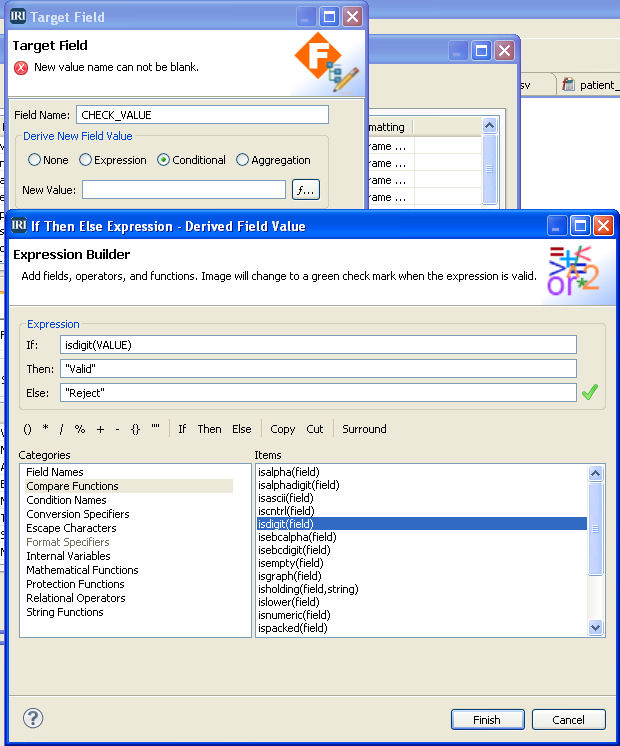

Data validation is a process that ensures a program operates with clean, correct and useful data. It uses routines known as validation rules that systematically check data entered into the system for correctness and meaningfulness.
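Validation rules can be modeled as named predicates that run against each incoming record. The Python sketch below shows this. The rules and field names are hypothetical, and the e-mail pattern is a deliberately loose format check, not a full address validator. Each record comes back with the list of rules it fails, and an empty list means the record passed.

```python
import re
from dataclasses import dataclass
from typing import Callable

@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]  # returns True when the record passes

def _is_int_text(value) -> bool:
    # isdecimal() rejects characters like "²" that isdigit() accepts but int() cannot parse
    return str(value).isdecimal()

# Hypothetical rules for a customer record.
RULES = [
    Rule("id is a positive integer",
         lambda r: _is_int_text(r.get("id", "")) and int(r["id"]) > 0),
    Rule("email has a plausible format",
         lambda r: re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", str(r.get("email", ""))) is not None),
    Rule("age between 0 and 130",
         lambda r: _is_int_text(r.get("age", "")) and 0 <= int(r["age"]) <= 130),
]

def validate(record: dict, rules=RULES) -> list:
    """Return the names of all rules the record violates."""
    return [rule.name for rule in rules if not rule.check(record)]

print(validate({"id": "7", "email": "ann@example.com", "age": "42"}))  # []
print(validate({"id": "0", "email": "not-an-email", "age": "200"}))
```

Keeping rules as data rather than hard-coded `if` statements makes it easy to add, remove, or report on individual checks.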

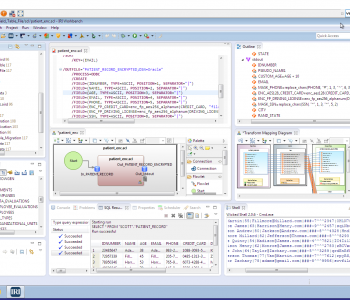

IRI Workbench is a powerful, free integrated development environment (IDE), built on Eclipse and designed for the user-friendly creation, management, and deployment of jobs in most IRI software products.

Data Management and Protection Expertise – IRI Professional Services Now Open

In response to customer requests for bespoke applications across a broad range of data processing requirements, IRI now has an in-house business unit for the implementation of custom data transformation, ad hoc reporting, and data security solutions.

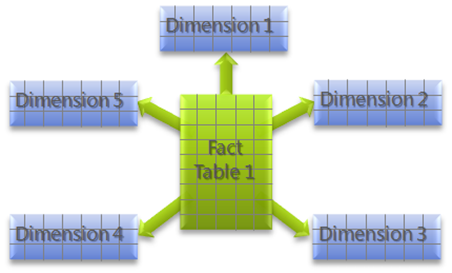

Star schema is the simplest and most common database modelling structure used in traditional data warehouse paradigms. The schema resembles a constellation of stars: generally several bright stars (facts) surrounded by dimmer ones (dimensions), where one or more fact tables reference different dimension tables.
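To make the structure concrete, here is a small star schema built in an in-memory SQLite database: one fact table (`fact_sales`) whose foreign keys point at two dimension tables (`dim_product`, `dim_date`). The table names, columns, and rows are hypothetical. The query shows the typical access pattern, which joins the fact table to its dimensions and then aggregates the facts by dimension attributes.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables: descriptive attributes, one row per member.
CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, name TEXT, category TEXT);
CREATE TABLE dim_date    (date_id    INTEGER PRIMARY KEY, year INTEGER, month INTEGER);

-- Fact table: numeric measures plus a foreign key to each dimension.
CREATE TABLE fact_sales (
    product_id INTEGER REFERENCES dim_product(product_id),
    date_id    INTEGER REFERENCES dim_date(date_id),
    units      INTEGER,
    amount     REAL
);

INSERT INTO dim_product VALUES (1, 'Widget', 'Hardware'), (2, 'Gizmo', 'Hardware'), (3, 'Manual', 'Books');
INSERT INTO dim_date    VALUES (1, 2023, 12), (2, 2024, 1);
INSERT INTO fact_sales  VALUES (1, 1, 3, 30.0), (2, 1, 1, 25.0), (3, 2, 2, 40.0), (1, 2, 4, 40.0);
""")

# Star join: fact table in the middle, one join per dimension, then aggregate.
rows = conn.execute("""
    SELECT d.year, p.category, SUM(f.units), SUM(f.amount)
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_id = f.product_id
    JOIN dim_date    AS d ON d.date_id    = f.date_id
    GROUP BY d.year, p.category
    ORDER BY d.year, p.category
""").fetchall()

for row in rows:
    print(row)
conn.close()
```

Each dimension joins to the fact table directly, never to another dimension. That single level of joins is what gives the schema its star shape and keeps queries simple.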

Encryption key management is one of the most important “basics” for an organization dealing with security and privacy protection. Major data losses and regulatory compliance requirements have prompted a dramatic increase in the use of encryption within corporate data centers.

ODBC, Open Database Connectivity, is an industry-standard application programming interface (API) for access to both relational and non-relational database management systems (DBMS). ODBC was first developed by the SQL Access Group (SAG) in 1992 in response to the need to access data stored in a variety of proprietary personal computer, minicomputer, and mainframe databases without having to know their proprietary interfaces.