How to Tokenize Credit Card Data

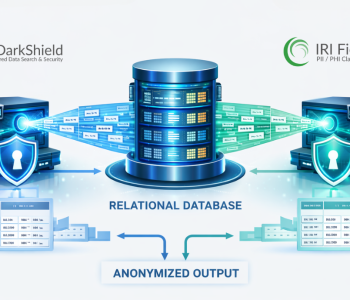

Abstract: This article explains a method of protecting credit card data with tokenization using the IRI FieldShield data masking tool.

Submitting one’s credit card details electronically (e.g., via a physical card scanner or an internet purchase) can be disconcerting. If stolen, the personally identifiable information on the card can lead to fraudulent charges, identity theft, and other harm.

To provide better security, a standard was created to protect cardholders when they provide credit card numbers online. This standard is called the Payment Card Industry Data Security Standard, or PCI DSS.

PCI DSS is a global standard. Any business that stores, processes, or transmits cardholder data, or that accepts payment cards, must adhere to its rules. Encrypting the credit card number, or Primary Account Number (PAN), is one way to comply with PCI DSS; tokenization is another.

To make the tokenized numbers more secure, IRI uses Open Database Connectivity (ODBC) to connect to the database that holds them. This allows Windows NT Authentication to be used when logging into the database.

IRI advises choosing Windows Authentication, rather than SQL Server Authentication, as the access method for the database that holds the card numbers. With Windows Authentication, the creator of the SQL Server database can specify which users may access it, and those users log in with their existing Windows credentials.
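As a minimal sketch of what choosing Windows Authentication looks like at the ODBC level, the Python function below builds a connection string for a SQL Server token database. The driver name, server name, and database name are assumptions for illustration, not values from an IRI configuration. `Trusted_Connection=yes` is the SQL Server ODBC keyword that requests integrated (Windows) authentication, so the string carries no user ID or password.

```python
def build_odbc_connection_string(
    server: str,
    database: str,
    driver: str = "ODBC Driver 17 for SQL Server",  # assumed driver; use the one installed
) -> str:
    """Return a SQL Server ODBC connection string that uses Windows Authentication."""
    attributes = {
        "DRIVER": "{" + driver + "}",
        "SERVER": server,
        "DATABASE": database,
        # Integrated (Windows) authentication: the caller's Windows login is used,
        # so no UID/PWD pair is embedded in the string.
        "Trusted_Connection": "yes",
    }
    return ";".join(f"{key}={value}" for key, value in attributes.items()) + ";"


if __name__ == "__main__":
    # Hypothetical server and database names.
    conn_str = build_odbc_connection_string("vault-sql01", "TokenVault")
    print(conn_str)
    # With the third-party pyodbc package installed, this string could be passed
    # to pyodbc.connect(conn_str) to open the connection.
```

Access control then lives in SQL Server's Windows logins rather than in a password stored alongside the job.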

PCI Tokenization vs. Encryption

Tokenization is similar to encryption, but the token is not computed from the original PAN. Instead, the original PAN is replaced with a substitute token value produced by other means, such as a random salt or an index.

The advantage of tokens is their uniqueness. Each token is created for, and used in, only one location, which eliminates the possibility of multiple sources sharing the same token. With encryption, by contrast, the PAN is transformed by an algorithm, so the ciphertext still holds the original data and can be reversed by anyone who has the key.
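To make the contrast concrete, here is a minimal in-memory sketch of a token vault in Python. The class and method names are hypothetical and do not reflect FieldShield's implementation. The point it illustrates is that the token is drawn at random, so the PAN can only be recovered by looking it up in the vault, never by computing it from the token.

```python
import secrets


class TokenVault:
    """Minimal in-memory token vault (hypothetical sketch, not FieldShield's API).

    Tokens are random digits, so they carry no information about the PAN;
    the only way back to the PAN is a lookup in this vault.
    """

    def __init__(self) -> None:
        self._token_for_pan: dict[str, str] = {}
        self._pan_for_token: dict[str, str] = {}

    def tokenize(self, pan: str) -> str:
        # Reuse the existing token so each PAN maps to exactly one token.
        existing = self._token_for_pan.get(pan)
        if existing is not None:
            return existing
        while True:
            token = "".join(secrets.choice("0123456789") for _ in range(len(pan)))
            # Reject the PAN itself and any already-issued token, keeping the map one-to-one.
            if token != pan and token not in self._pan_for_token:
                break
        self._token_for_pan[pan] = token
        self._pan_for_token[token] = pan
        return token

    def detokenize(self, token: str) -> str:
        # Raises KeyError for tokens this vault never issued.
        return self._pan_for_token[token]
```

Because each PAN has exactly one token and each token maps back to exactly one PAN, a stolen token is useless without access to the vault itself.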

PCI DSS Compliance and Data Masking Tools

IRI has created a function in the FieldShield data masking tool for those who want to tokenize credit card (or other) values. In the PCI DSS context, the function's only input is a 16-digit card number. The tokenization function connects through ODBC, so it can be configured to work with any SQL database that is reachable through an ODBC driver.

The above flowchart illustrates the tokenization control flow. The input is a 16-digit PAN, either a literal value or a value passed in from a file. An example of the script syntax that calls the tokenization function is:

    /FIELD=(FIELDDATA=pci_assign_token(CardNumber), TYPE=ASCII, POSITION=1, SEPARATOR="\n")

In the example above, the only parameter of the function call is CardNumber, a string of 16 digits.

The function checks the assigned database for the card number. If the card number is not present, it is tokenized, the card number and its token are inserted into the database, and the token is passed back to FieldShield. If the card number is already in the database, the corresponding token is passed back to FieldShield instead. In both cases, the user can then write the tokenized values to a file.
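The lookup-or-insert flow described above can be sketched in Python as follows. This is an illustration under stated assumptions, not IRI's `pci_assign_token` code: the standard-library `sqlite3` module stands in for the ODBC-connected SQL database, and the table `token_vault`, its columns, and the functions `open_vault`, `lookup_or_assign_token`, and `tokenize_stream` are hypothetical. The last function mimics reading newline-separated PANs from one file and writing their tokens to another.

```python
import re
import secrets
import sqlite3
from typing import TextIO

PAN_RE = re.compile(r"[0-9]{16}")  # input must be exactly 16 ASCII digits


def open_vault(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the token table; sqlite3 stands in for the ODBC database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS token_vault ("
        "pan TEXT PRIMARY KEY, token TEXT NOT NULL UNIQUE)"
    )
    return conn


def lookup_or_assign_token(conn: sqlite3.Connection, pan: str) -> str:
    """Return the stored token for pan, creating and storing one if absent."""
    if not PAN_RE.fullmatch(pan):
        raise ValueError("expected a 16-digit card number")
    query = "SELECT token FROM token_vault WHERE pan = ?"
    row = conn.execute(query, (pan,)).fetchone()
    if row is not None:
        return row[0]  # card number found: pass back its existing token
    while True:
        token = "".join(secrets.choice("0123456789") for _ in range(16))
        if token == pan:
            continue
        try:
            with conn:  # commits on success, rolls back on error
                conn.execute(
                    "INSERT INTO token_vault (pan, token) VALUES (?, ?)", (pan, token)
                )
            return token  # new card number: token stored, then passed back
        except sqlite3.IntegrityError:
            # Token collision, or another writer stored this PAN first.
            row = conn.execute(query, (pan,)).fetchone()
            if row is not None:
                return row[0]


def tokenize_stream(conn: sqlite3.Connection, src: TextIO, dst: TextIO) -> None:
    """Read one PAN per line from src and write its token, one per line, to dst."""
    for line in src:
        pan = line.strip()
        if pan:
            dst.write(lookup_or_assign_token(conn, pan) + "\n")
```

The `UNIQUE` constraint on the token column is what enforces the one-token-per-location property at the database level, even if two jobs write to the vault at once.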

Additional functions, like format-preserving encryption (FPE), can also be applied within the same FieldShield job: ahead of tokenization, for an additional level of security in the token vault (database), or after de-tokenization, to protect the clear-text PAN from unauthorized recipients.
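For illustration only, the Python sketch below shows the idea behind format-preserving encryption: a 16-digit PAN encrypts to another 16-digit string and decrypts back with the same key. It is a toy balanced Feistel cipher with an HMAC-SHA256 round function, chosen here for brevity. It is not NIST FF1 or FF3-1, it is not the FPE function FieldShield provides, and it is not a vetted cipher; the key shown is hypothetical.

```python
import hashlib
import hmac
import re

HALF_MODULUS = 10 ** 8  # each half of a 16-digit PAN holds 8 decimal digits
ROUNDS = 10             # number of Feistel rounds in this toy construction
DIGITS_16 = re.compile(r"[0-9]{16}")


def _check(value: str) -> None:
    if not DIGITS_16.fullmatch(value):
        raise ValueError("expected exactly 16 ASCII digits")


def _round_value(key: bytes, round_no: int, half: int) -> int:
    """Keyed pseudo-random function: HMAC-SHA256 of (round, half), reduced mod 10^8."""
    message = f"{round_no}:{half:08d}".encode()
    digest = hmac.new(key, message, hashlib.sha256).digest()
    return int.from_bytes(digest, "big") % HALF_MODULUS


def fpe_encrypt(key: bytes, pan: str) -> str:
    """Encrypt a 16-digit string to another 16-digit string."""
    _check(pan)
    left, right = int(pan[:8]), int(pan[8:])
    for i in range(ROUNDS):
        # Feistel round: swap halves and add the keyed round value to the old left half.
        left, right = right, (left + _round_value(key, i, right)) % HALF_MODULUS
    return f"{left:08d}{right:08d}"


def fpe_decrypt(key: bytes, ciphertext: str) -> str:
    """Invert fpe_encrypt by running the rounds backwards with subtraction."""
    _check(ciphertext)
    left, right = int(ciphertext[:8]), int(ciphertext[8:])
    for i in reversed(range(ROUNDS)):
        left, right = (right - _round_value(key, i, left)) % HALF_MODULUS, left
    return f"{left:08d}{right:08d}"


if __name__ == "__main__":
    key = b"example-key-not-for-production"  # hypothetical key
    protected = fpe_encrypt(key, "4111111111111111")
    print(protected, fpe_decrypt(key, protected))
```

Because the ciphertext keeps the 16-digit format, it fits wherever a clear-text PAN would, for example in the card-number column of a token vault.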

End Note: As you consider the best tokenization tools for payment security in your use case, you may wish to investigate FieldShield and the other top data masking tools in the IRI Data Protector Suite, as well as IRI reference customers in the banking, financial services, and insurance industries.