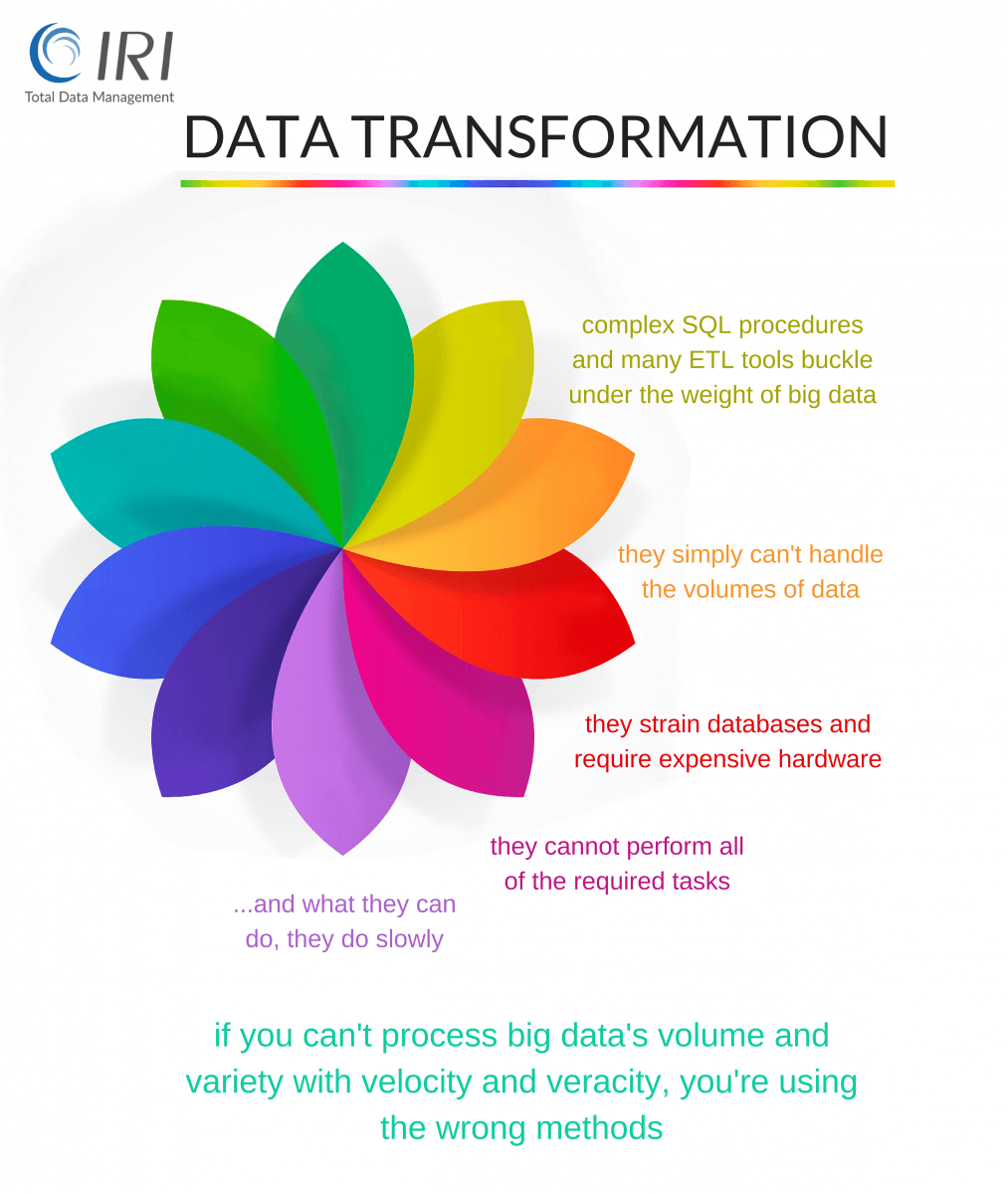

Looking at Data Transformation Tools?

ETL tools, Perl scripts, custom programs, and SQL procedures can be slow, costly, and complex data transformation solutions. Unfamiliar GUIs and coding make job specification difficult, and runtimes suffer under the weight of big data. Can you:

- transform huge data volumes without using your DB, or changing your IT fabric; e.g., without Hadoop, NoSQL, or an ELT appliance?

- leverage multiple CPUs and cores for big jobs, run several tasks in the same I/O, and dynamically allocate resources to optimize performance?

- easily understand, share, and modify your data and job definitions without a major learning curve?

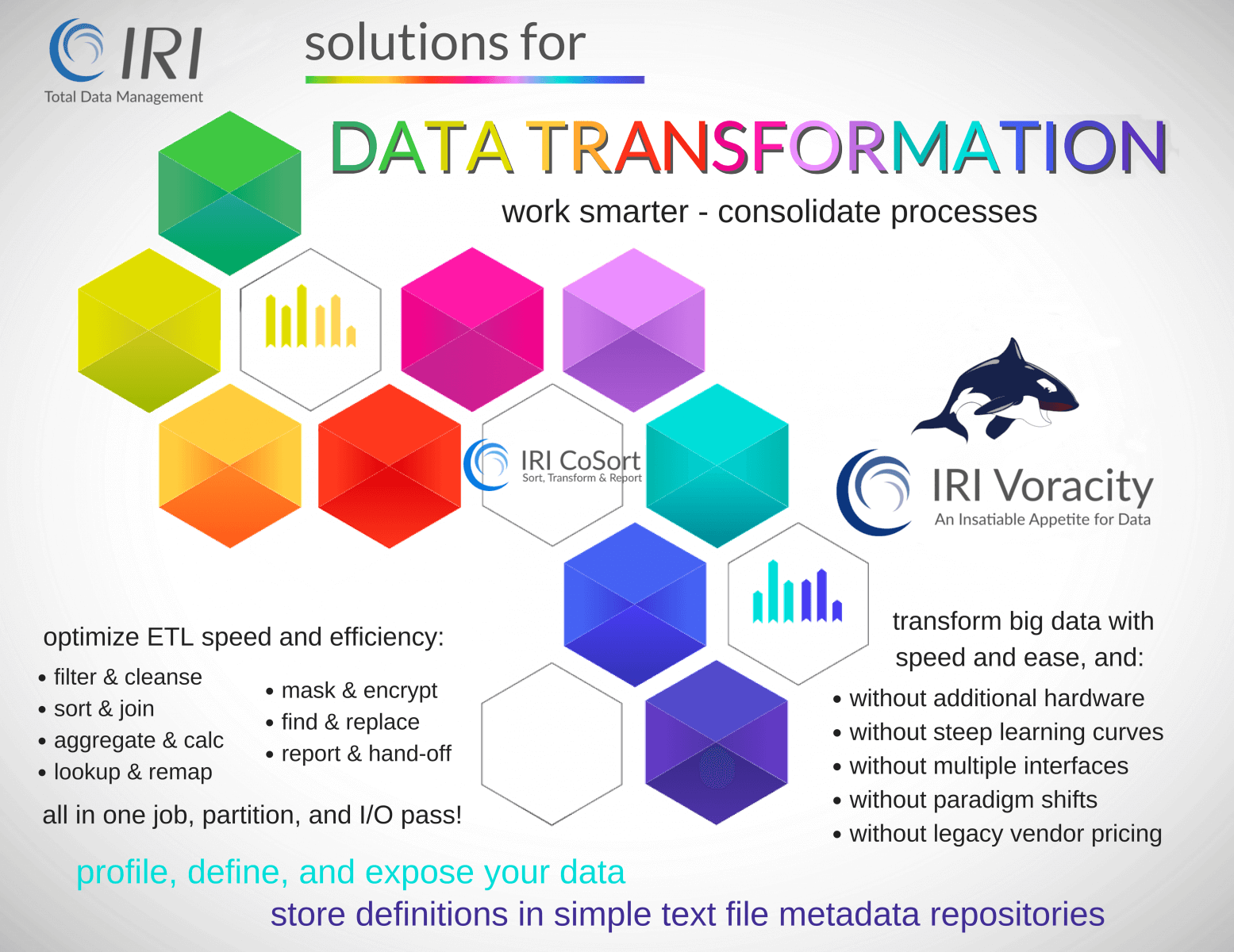

The 4GL SortCL program inside both the IRI CoSort package and IRI Voracity platform is a long-proven (and visually configurable) big data transformation engine that does the heavy lifting in the world's largest data warehouses, operational data stores, core banking applications, clickstream data webhouses, data lakes, and more.

And if you do use Hadoop, you can still run many of the same transformations defined in SortCL jobs seamlessly in MapReduce, Spark, Spark Stream, Storm, and Tez.

Consolidate Tasks

Optimize ETL operations as you combine sorts, joins, and aggregates in a single job script, partition, and I/O pass. At the same time, de-duplicate and filter, convert and re-map, lookup, rank, (de-)normalize, calculate, shift, encrypt to protect, and mask to re-cast.
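To make the idea of task consolidation concrete, here is a minimal Python sketch. It is not SortCL syntax and not how the engine is implemented. It only illustrates why filtering, de-duplicating, sorting, and aggregating in one pipeline avoids re-reading the data between steps. The record layout `(region, order_id, amount)` and the function name `consolidate` are assumptions made up for this illustration.

```python
from itertools import groupby
from operator import itemgetter

def consolidate(rows, min_amount=0.0):
    """Filter, de-duplicate, sort, and aggregate in one pipeline.

    rows: iterable of (region, order_id, amount) tuples (hypothetical layout).
    Returns (detail, summary): sorted unique detail rows and per-region totals.
    """
    # Filter while reading, so rejected rows never reach the sort.
    kept = (r for r in rows if r[2] >= min_amount)
    # Sort once on (region, order_id); every later step reuses this order.
    ordered = sorted(kept, key=itemgetter(0, 1))
    detail, summary = [], []
    for region, group in groupby(ordered, key=itemgetter(0)):
        total, last_id = 0.0, None
        for row in group:
            if row[1] == last_id:  # duplicate order_id within the region: skip
                continue
            last_id = row[1]
            detail.append(row)
            total += row[2]
        summary.append((region, total))
    return detail, summary

rows = [
    ("west", 2, 40.0),
    ("east", 1, 10.0),
    ("west", 2, 40.0),  # duplicate of the first row
    ("east", 3, 5.0),
    ("west", 1, 0.5),   # below min_amount, filtered out
]
detail, summary = consolidate(rows, min_amount=1.0)
```

The single sort feeds both outputs: the detail target and the summary target are produced from the same ordered stream, rather than from separate jobs that each read and sort the input again.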

Transform large volumes of data in many different table and file sources together. Discover, define, and expose your data and manipulation definitions in simple text file metadata repositories you can manage in the free IRI Workbench GUI, built on Eclipse. Use named fields for the mappings, as you:

- Map sources to targets

- Reduce script sizes and creation times

- Facilitate reorg and ETL operations

- Produce load and file-compare metadata

Expand Capabilities

Do all the same transforms that slower and more complex SQL procedures or ETL tools do, and perform:

- change data capture

- row-column rotation (pivot/unpivot)

- slowly changing dimension reporting

- star (or snowflake) schema targeting

- static, structured, running, and windowed aggregates

- discrete and operative value lookups

- format mass and other value modifications

- data cleansing

- data protection (masking, encryption, pseudonymization, etc.)
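As one example from the list above, change data capture can be pictured as a keyed comparison of two snapshots. The Python sketch below is a conceptual illustration only, not SortCL syntax. The function name `capture_changes`, the action labels, and the sample records are hypothetical.

```python
def capture_changes(old, new):
    """Compare two snapshots keyed by primary key.

    old, new: dicts mapping key -> record tuple (hypothetical layout).
    Returns a list of (action, key, record) entries in key order.
    """
    changes = []
    for key in sorted(old.keys() | new.keys()):
        if key not in old:
            changes.append(("insert", key, new[key]))
        elif key not in new:
            changes.append(("delete", key, old[key]))
        elif old[key] != new[key]:
            changes.append(("update", key, new[key]))
        # Identical records produce no change entry.
    return changes

old = {1: ("Ann", "NY"), 2: ("Bob", "LA"), 3: ("Cy", "SF")}
new = {1: ("Ann", "NY"), 2: ("Bob", "TX"), 4: ("Di", "DC")}
changes = capture_changes(old, new)
```

For volumes that do not fit in memory, the same comparison is typically done as a merge over two inputs already sorted on the key, which is why sorting and change capture pair naturally.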

Create custom reports, and hand off pre-processed subsets to the files, tables, and federated views that your applications, data marts and BI users can leverage immediately.

Use Existing Metadata

SortCL and related facilities in the CoSort package accept many third-party data layouts, e.g., DB DDL, COBOL copybooks, CSV, LDIF and XML files, CLF and ELF web logs, ASN.1 CDRs, MQTT IoT streams, JCL sort parms, and bulk DB loader file metadata. SortCL job scripts contain SQL-familiar commands that use and/or reference the layouts. The IRI Workbench GUI discovers and generates those layouts automatically.

Third-party metadata interchange and generation platforms including MITI MIMB, Data Switch, and ERwin (Quest) also generate SortCL-compatible metadata from popular BI, ETL, modeling, and other tool environments. This means you can leverage the metadata you already have for data transformation, reporting, and data conversion services using SortCL and other IRI Voracity data management platform solutions.

Interoperate & Accelerate

SortCL transformations work hand-in-hand with data extraction and loading utilities. For example, SortCL can sort the flat-file VLDB data unloaded from IRI FACT (Fast Extract), and pipe it pre-sorted into database load utilities like SQL*Loader. SortCL can also connect to other files, tables, and sheets on-premises or in the cloud to acquire and deliver data.
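The pre-sorted hand-off described above can be sketched in Python as a filter that reads delimited rows from one stream and writes them to another in key order. This is a generic illustration, not SortCL, FACT, or SQL*Loader syntax. The function name `presort_csv` and the sample columns are assumptions.

```python
import csv
import io

def presort_csv(src, dst, key_column):
    """Copy CSV rows from src to dst, sorted on key_column; header stays first.

    Keys are compared as strings here; a real job would apply the
    column's declared data type and collation.
    """
    reader = csv.reader(src)
    header = next(reader)
    idx = header.index(key_column)
    writer = csv.writer(dst, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(sorted(reader, key=lambda row: row[idx]))

# In-memory streams stand in for the unload output and the loader input.
src = io.StringIO("id,name\n30,cy\n10,ann\n20,bob\n")
dst = io.StringIO()
presort_csv(src, dst, "id")
```

In a shell pipeline, `src` and `dst` would be `sys.stdin` and `sys.stdout`, so the extract, sort, and load steps exchange data without intermediate files. Loading rows already ordered on the target's index key generally reduces the sorting work the database must do during the load.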

SortCL transforms can run alongside ETL tools like Informatica and DataStage, to optimize their performance. SortCL jobs run on the command line, in batch scripts, from 3GL programs, via API calls, or in the IRI Workbench GUI, built on Eclipse™. You can easily embed these transforms to accelerate your applications the same way Kalido, Terastream, General Dynamics and Minsait do, to name a few.

SortCL exploits CoSort's granular speed tuning and flexible CPU licensing. IRI's continuing innovation in parallel data movement, I/O and memory management, and data manipulation functionality and consolidation, along with our meaningful industry partnerships, keeps you at the leading edge of big data transformation.

Browse the Data Transformations that you can accomplish and combine in SortCL above. Learn more below:

Blog Links

Product Reviews